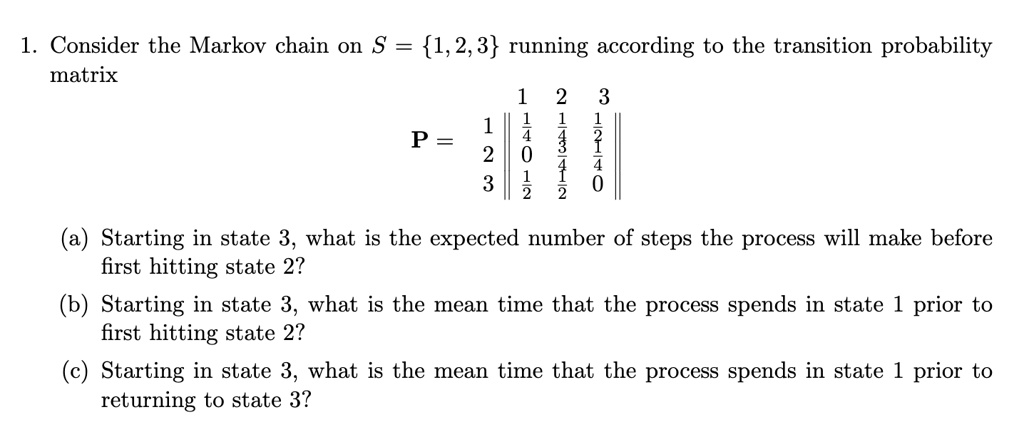

SOLVED: 1. Consider the Markov chain on $S = \{1, 2, 3\}$ running according to the transition probability matrix $P$. Starting in state 3, what is the expected number

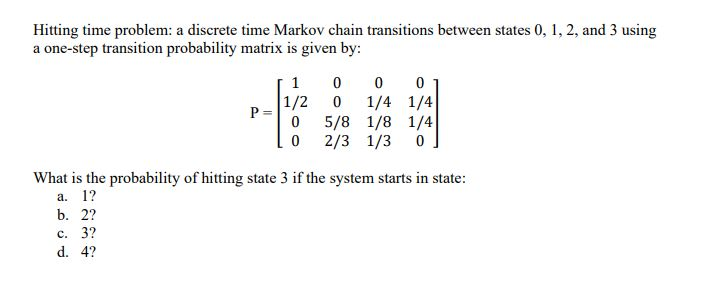

probability theory - Gambler's ruin (calculating probabilities--hitting time) - Mathematics Stack Exchange

Screenshot of hitting times distribution for Markov chains task three... | Download Scientific Diagram

probability theory - Question to a proof about hitting and first return times - Mathematics Stack Exchange

probability - Expected hitting time of a certain state in a Markov chain - Mathematics Stack Exchange

SOLVED: Problem 1. Consider the Markov chain $\{X_n\}_{n=0}^{\infty}$ with infinite state space $X = \{0, 1, 2, 3, 4, \ldots\}$ and 1-step transition probabilities $P_{ij} = 0.9$ if $j = i$, $P_{ij} = 0.1$ if $j = i+1$, and $P_{ij} = 0$ otherwise

![\[CS 70\] Markov Chains – Hitting Time, Part 1 - YouTube](https://i.ytimg.com/vi/_fOZXHRxYMw/maxresdefault.jpg)